A long time ago in a building far away, I had a short career in testing. I was ok at it, read a lot about it, studied it a bit, and even wrote a bit about it.

Test automation was bad then, and it may be worse now.

A is for… Automation?

About eleven years ago, I released an e-book called “The A Word: Under the Covers of Test Automation”. The A in the title was, of course, for “Automation”, but I thought I was clever because the A and W also stood for Angry Weasel.

I’m not so clever.

The book was an edited set of blog posts I wrote in the late 2000s and early 2010s covering a handful of test automation topics. It’s a bunch of ideas on approaching test automation from a test design perspective rather than blindly automating test cases. As you’d expect from me, it’s highly opinionated. Unlike How We Test Software at Microsoft, however, this one has stood up well to time.

The book is available on Leanpub - but they got rid of their free tier without a membership, so a free version (as a PDF) is here if you want to browse a copy. If you buy a copy, all proceeds get donated to charity.

Updates?

I always had an idea that I’d update the e-book, but never got around to it. I remember attending the Google Test Automation Conference in 2014 where almost every single talk discussed “flaky tests”. I missed the boat by not adding a few chapters to that book on flaky tests and how to deal with them.

Just a few years earlier, a few of us on the Xbox One team designed some pretty cool and innovative stuff for testing console applications that would have been fodder for an entire new book on automation topics.

And I always meant to write more about the Device Simulation Framework that I wrote about in Dorothy Graham’s Experiences of Test Automation. When I wrote that chapter / case study, I remember already being so-freaking-tired of all of the articles on test automation that were all about automating user workflows instead of solving testing problems with code. Overall, I’m just really sick and tired of the infatuation the software industry still has with UI automation.

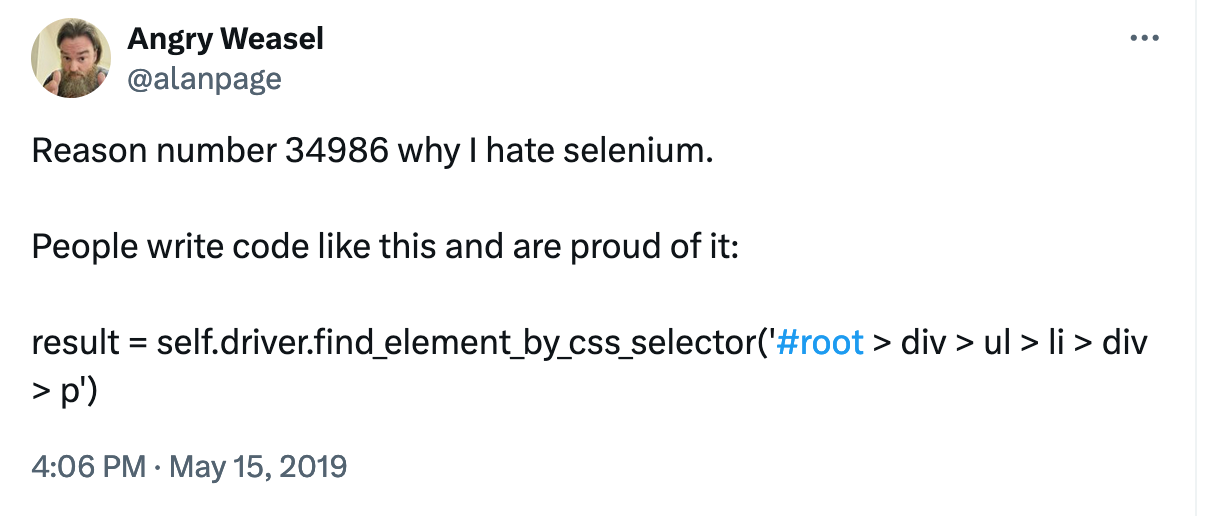

I’m still sick of it. I don’t read as many testing articles today, because I just can’t handle another article of someone telling me how to use an obscure selector in Selenium to automate an edge case browser scenario that someone else told them to automate. But nobody cares. In fact, I took a lot of heat over this tweet back in 2019.

The fallout of this tweet…um X-thing(?) was an appearance by Jason Huggins on AB Testing where we talked about the huge problems that Selenium solves, as well as the huge problems it creates.

Write Different Code

By far, the best “test” code I’ve ever written has been tools to help me diagnose code. Whether it was debugger extensions, or just flat out dumps of data structures, I’ve probably found more bugs quicker via diagnostic tools than by automating an application.

Yes, it’s fun to watch an automated mouse navigate an application and make it do cool stuff - but it’s a parlor trick. Ninety per cent of the bugs found in simple UI automation would have been found if someone just used the application for a minute (and you probably would have also found the tab layout bug that the “test automator” worked around).
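To make the "flat out dumps of data structures" idea concrete, here is a minimal sketch in Python. It is not code from the book or from any team mentioned here; the function name `dump_structure`, the `!!` marker, and the sample `session` data are all made up for illustration. It walks nested dicts and lists, prints one line per node, and flags two things that are often worth a second look: `None` values and structures that point back at themselves.

```python
from typing import Any, Iterator, Optional


def dump_structure(obj: Any, path: str = "root",
                   _seen: Optional[set] = None) -> Iterator[str]:
    """Yield one line per node: its path, its type, and '!!' if it looks suspicious."""
    seen = set() if _seen is None else _seen
    if isinstance(obj, (dict, list)):
        if id(obj) in seen:
            # This container is one of its own ancestors: a reference cycle.
            yield f"{path}: <cycle> !!"
            return
        seen.add(id(obj))
        yield f"{path}: {type(obj).__name__}[{len(obj)}]"
        items = obj.items() if isinstance(obj, dict) else enumerate(obj)
        for key, value in items:
            yield from dump_structure(value, f"{path}[{key!r}]", seen)
        # Forget this container on the way out so shared (non-cyclic)
        # references elsewhere in the structure are not reported as cycles.
        seen.discard(id(obj))
    else:
        flag = " !!" if obj is None else ""
        yield f"{path}: {type(obj).__name__} = {obj!r}{flag}"


# Hypothetical data with two planted problems: a missing token and a cycle.
session = {"user": "alice", "token": None, "cart": [{"sku": "A1", "qty": 2}]}
session["cart"].append(session)

for line in dump_structure(session):
    print(line)
```

Nothing here drives an application. It just makes state visible, which is the point: a human scanning the flagged lines will often spot the bug before any scripted click-through would.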

Test Automation in 2024

Short story - it still sucks. In the last ten years, I’ve worked with a lot of development teams on improving velocity, and in every single case I’ve found that developers owning all test automation results in better tests, more testable code, faster delivery, and higher overall quality. Some of these teams don’t have dedicated testers, but on teams with dedicated testers, those folks do not write automated tests. They write tools to assist in testing or debugging, or work with the development team in order to improve testing knowledge across the team.

I obviously nodded my head when I read this line from the research-heavy and widely popular book, Accelerate.

> It’s interesting to note that having automated tests primarily created and maintained either by QA or an outsourced party is not correlated with IT performance. The theory behind this is that when developers are involved in creating and maintaining acceptance tests, there are two important effects. First, the code becomes more testable when developers write tests. This is one of the main reasons why test-driven development (TDD) is an important practice—it forces developers to create more testable designs. Second, when developers are responsible for the automated tests, they care more about them and will invest more effort into maintaining and fixing them.

Yet LinkedIn, at least, tells me that “developers can’t test”, or worse, that “developers shouldn’t test”. IMO, developers who can’t or won’t test should be unemployed developers.

The UI Automation Dilemma

There’s a bit of a conundrum with where this story goes (meaning I have a strong opinion that few people agree with). I believe that developers should be writing the vast majority of automation - and all of it where possible. My experience and research show that it’s just flat out better.

When developers write web automation with Selenium (or Cypress or Playwright), they undoubtedly write better code, and rather than use stupid methods to get at obscure controls, they make their code more testable so that their tests are simple, and the code is better designed. When those tests inevitably fail, I’d honestly just delete the test rather than chase down a cascade of selectors.
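One reading of "make their code more testable" is to pull decision logic out of the UI layer so a test can call it directly, with no browser and no selectors. The sketch below assumes that reading. Every name in it (`SignupForm`, `validate_signup`, the specific rules) is hypothetical and only there to show the shape. Another common tactic, adding stable test hooks to the markup, is not shown.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SignupForm:
    """Plain data the UI layer would collect from its input fields."""
    email: str
    password: str


def validate_signup(form: SignupForm) -> List[str]:
    """Return human-readable errors; an empty list means the form is valid."""
    errors = []
    if "@" not in form.email:
        errors.append("email must contain @")
    if len(form.password) < 8:
        errors.append("password must be at least 8 characters")
    return errors


# Tests exercise the rules directly. A layout change cannot break them,
# because they never touch the page.
def test_rejects_short_password():
    errors = validate_signup(SignupForm("a@example.com", "short"))
    assert errors == ["password must be at least 8 characters"]


def test_accepts_valid_form():
    assert validate_signup(SignupForm("a@example.com", "long-enough-pw")) == []
```

With the rules covered this way, whatever UI test remains only has to confirm that the page is wired to `validate_signup`, which is a much smaller and less fragile job.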

Several years ago, I was playing with TestCafe for some automation, and I took a few hours and wrote a small suite of tests for a set of web pages. It wasn’t horribly exciting, but it worked. Even better, when I ran it again 6 months later, it still mostly ran - but I did have to modify a few things, since the underlying layout changed just a bit.

It was still too much. Is it really worth it to constantly update tests over and over and over again?

The Devil?

I’ve been around long enough to remember when “record and playback” automation tools were absolutely awful. They created horrible “code”, and the tests were extremely flaky. That was then, and this is now. These days, record and playback is massively improved (via ML and other algorithms), and I think the cases for writing UI test automation code are limited. The rise of tools like Autify, Testim and others makes repeatable and maintainable web automation simple. I took a few hours of time in TestCafe to do what I could have done in minutes with Autify. When (and if) that recorded test fails to run properly, I can take a few minutes to re-create the test.

The dilemma comes in two flavors. The mistrust of record and playback tools is fair. They have a history of being really horrible. The other flavor is that the developers I know like to write code. Now that we’ve given them Playwright and similar tools, the now test-infected developers enjoy writing web automation to help ensure their code is working as designed. I think writing UI automation is a waste of time, but that’s just me.

I haven’t won this battle, but I will keep fighting it.

There are still a lot of misconceptions around test automation. Ten-ish years ago, I reviewed a paper on test automation written by some testing “experts”. It was an extremely naive look at test automation full of misconceptions and bad ideas. The authors ignored my feedback and left my name on the reviewer list - a fact that someone points out to me every few months or so. Those authors - along with a whole lot of other people - continue to look at automated testing incorrectly.

The Future State of Test Automation

The future isn’t complicated. It looks like this.

The developers of the code should write nearly all of the test automation. This includes UI automation (WHEN NECESSARY), as well as any other tests needed to give them confidence that their code is correct.

When you absolutely have to use UI automation, use advanced record and playback tools for UI automation. They will work better for most cases.

Write code to help you test and diagnose software. IME, those tools are more valuable than code that just makes the application move around.

Use people with testing expertise to help developers test better - via automation and other means.

A Penny for Your Thoughts?

Because my post is about testing, I expect a bunch of testers will tell me I’m wrong. I don’t mind that - being wrong is how we learn. I’m not describing as much of what I want to see as I am describing what I’m seeing today.

We’ll see what happens.

By the way, the puzzle continues.

-A 12:1

I love this post for so many reasons, but I specifically wanted to say that I got a great chuckle out of this line:

> (and you probably would have also found the tab layout bug that the “test automator” worked around).

I was lucky enough to have experienced these things first hand. Thoughtworks taught developers how to test their code, and delete their test code when it no longer served a purpose. I still think their developer training is some of the best I've ever seen a company put together. It's also what made them incredibly fast at building things.

You might or might not be surprised how much businesses will pay for folks like Thoughtworks to come in and build something, and also train the developers around them to build in a similar way, only for it to fall apart because management or the whole huge "QA" department pushes back on developers owning all the functional code. Then when times get lean, they can those QA folks, and devs are left to figure out test code that they didn't write and that is often in a language they aren't using.

What's left behind is a little footprint of clean code that everyone can read and maintain, but can't remember how it was created and why it was so good. (Two major things were involved - unit tests, pair programming).

Still baffles me that companies don't require developers to know how to create unit tests, not even for TDD, but just unit tests period. You can almost predict that companies that wrote software without this vital scaffolding will have it all come crashing down in five to ten years under the weight of technical debt they can't keep up with. (I've personally seen this happen three times.)

The last time I created any UI automation, it was 2018, for a company creating software that had no unit tests and no longer exists. I wonder how many other folks that work around test automation have seen this happen or even made the connection. Could be coincidence, but given data from Accelerate, it looks like correlation to me.